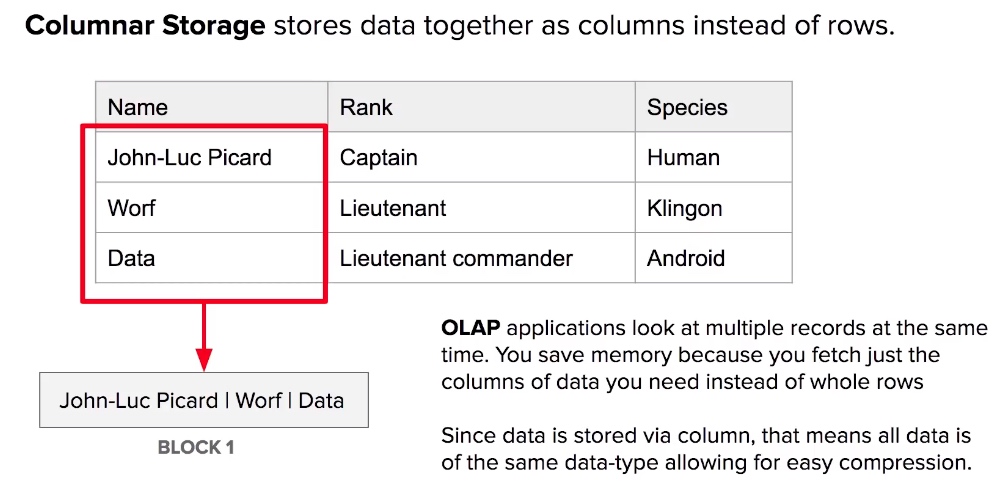

You are definitely on the right track with Kimball rather than Inmon for Redshift. There are a number of patterns for this, and I have used them all in different use cases:

1. "ELT" pattern - Load the source tables to Redshift fully, and do not do any significant transformations until the data has been loaded. For this you can either load to S3 and then use the Redshift COPY command, or, as I would recommend, use AWS Database Migration Service (DMS), which can sync a source (e.g. MySQL or Postgres) to a target (e.g. Redshift). Then, on a regular basis, run SQL processes within Redshift to populate dims. You can use third-party cloud-based tools such as Matillion to "simplify" this process if you want to (I do not recommend it).
2. "ETL" pattern - Transform the data in flight, using Apache Spark, and load the dims and facts into Redshift: spark->s3->redshift. This is also the approach taken if you use AWS Glue.
3. Do not transform! - Similar to 1), but just use the tables that have been loaded.

Note that Redshift sometimes works BETTER if you have a wide table with repeated values rather than a fact and dimensions. The reason is that the columnar approach lets Redshift compress the repeated values down to a level that is pretty efficient. I do not have a formula for when to use many dimensions vs a flat wide table; the only way is to try it and see!

I am currently dealing with a similar task: building an ETL process and designing a dimensional model. I have researched a lot for the best way to deal with it and found an amazingly helpful source of techniques we should definitely apply when working with MPP. Regarding "The question I have is about what is the best practice for loading a star schema in Redshift?", be sure to take a look into this resource. It is a ~35 page document with powerful techniques to leverage the use of MPP columnar stores. I bet you will find it incredibly helpful.
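The "ELT" pattern above (land the raw rows first, then run SQL inside the warehouse to populate dims and facts) can be sketched roughly as follows. This is only an illustration under stated assumptions: SQLite stands in for Redshift so the snippet runs anywhere, the staging load is simulated with plain inserts where Redshift would typically use COPY from S3, and every table and column name (`stg_sales`, `dim_customer`, `fact_sales`) is hypothetical. In Redshift itself the surrogate key would more likely come from an `IDENTITY` column than from SQLite's `AUTOINCREMENT`.

```python
import sqlite3

# SQLite is a stand-in for Redshift so that this sketch is runnable.
# All table and column names below are hypothetical.
conn = sqlite3.connect(":memory:")
cur = conn.cursor()

cur.executescript("""
CREATE TABLE stg_sales (customer_email TEXT, customer_city TEXT, amount REAL);
CREATE TABLE dim_customer (
    customer_key   INTEGER PRIMARY KEY AUTOINCREMENT,  -- surrogate key
    customer_email TEXT UNIQUE,                        -- natural key
    customer_city  TEXT
);
CREATE TABLE fact_sales (customer_key INTEGER, amount REAL);
""")

# Step 1: land the raw rows in staging without transforming them.
# (In Redshift this step would typically be a COPY from S3.)
cur.executemany(
    "INSERT INTO stg_sales VALUES (?, ?, ?)",
    [
        ("a@example.com", "Leeds", 10.0),
        ("b@example.com", "York", 25.0),
        ("a@example.com", "Leeds", 5.5),
    ],
)

# Step 2: add one dimension row per natural key that is not in the dim yet.
cur.execute("""
INSERT INTO dim_customer (customer_email, customer_city)
SELECT s.customer_email, MIN(s.customer_city)
FROM stg_sales s
LEFT JOIN dim_customer d ON d.customer_email = s.customer_email
WHERE d.customer_key IS NULL
GROUP BY s.customer_email
""")

# Step 3: load the fact table, looking up the surrogate key by natural key.
cur.execute("""
INSERT INTO fact_sales (customer_key, amount)
SELECT d.customer_key, s.amount
FROM stg_sales s
JOIN dim_customer d ON d.customer_email = s.customer_email
""")
conn.commit()

dim_rows = cur.execute(
    "SELECT customer_key, customer_email FROM dim_customer ORDER BY customer_key"
).fetchall()
fact_total = cur.execute("SELECT SUM(amount) FROM fact_sales").fetchone()[0]
print(dim_rows, fact_total)
```

Because step 2 only inserts natural keys that are missing from the dimension, re-running it after a later staging load would not duplicate existing customers; handling changed attributes (slowly changing dimensions) is deliberately left out of this sketch.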

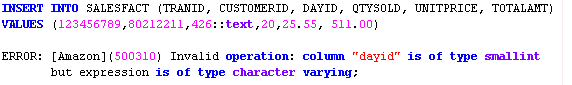

I have been researching Amazon's Redshift database as a possible future replacement for our data warehouse. My experience has always been in using dimensional modeling and Ralph Kimball's methods, so it was a little weird to see that Redshift doesn't support features such as the serial data type for auto-incrementing columns. There is, however, a recent blog post from the AWS Big Data blog about how to optimize Redshift for a star schema.

The question I have is about what is the best practice for loading a star schema in Redshift? I cannot find this answered in any of Redshift's documentation. I'm leaning toward importing my files from S3 into staging tables and then using SQL to do the transformations, such as lookups and generating surrogate keys, before inserting into the destination tables. Is this what others are currently doing? Is there an ETL tool worth the money to make this easier?

At first, I didn't know what the question was. But I believe that my question is the following: when applying filters, what data types will (and will not) result in query folding through the Redshift ODBC driver? I have checked the Simba docs and cannot find an answer there. Read on below to follow my train of exploration to this question.

I am using the built-in Redshift ODBC connector and am expecting my query to fold. I am loading in a table and applying a filter on a column called "city". However, the query is not folding when I use filters. I receive the following OdbcQuery: Folding Warning messages:

Data Type of column with searchable property UNSEARCHABLE should be SEARCHABLE or ALL_EXCEPT_LIKE.

You can override the supported data types from ODBC driver using SQLGetTypeInfo.

After these, I receive an OdbcQueryDomain: ReportFoldingFailure, as such:

ExceptionType: .FoldingFailureException, Microsoft.MashupEngine, Version=1.0.0.0, Culture=neutral, PublicKeyToken=31bf3856ad364e35

Message: Exception of type '.FoldingFailureException' was thrown.

at .(ColumnAccessQueryExpression expression)

at .(InvocationQueryExpression expression)

at .(InvocationQueryExpression expression)

at .(IList`1 selectItems, IList`1 queryExpressions, Keys keys, IValueReference typeValues, List`1 newColumns, OdbcQuerySpecification newQuerySpecification, Boolean allowAggregates, Int32 groupKey, IList`1 tableKeys, Boolean ignoreRange)

at .Odbc.OdbcQuer.

When searching for these errors, all the support I find relates to custom ODBC drivers. However, this Redshift driver is provided by Microsoft. I cannot find any other information around this.

From what I can find, I think, but am not too sure, that the column type in Redshift is causing this issue. That's because the SQLGetInfo step cannot get any information; the column is unsearchable. The type of the column that I am trying to filter in the database is "string". To test this hypothesis, I applied a filter on an "int" column to see whether the query folds correctly, and it does.
